Entanglements

What is entanglement?

During the prototype generation process, we extract a lot of information from the model, including which other classes share features with the class prototype we're generating. This overlap in features between classes is what we call entanglement.

Depending on your domain, some entanglement may be expected. For example, an animal classifier is likely to show significant entanglement between 'cat' and 'dog', because those classes share (at least) the 'fur' feature. However, entanglement, especially unexpected entanglement that doesn't make sense in your domain, can also indicate where your model might make misclassifications in deployment.
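How the tool scores entanglement internally isn't described here, so the following is only a minimal sketch of the shared-feature idea. It assumes each class can be summarized as a vector of feature activations (for example, averaged activations from a late layer of the model). The function name `entanglement_proxy` and the choice of cosine similarity are illustrative assumptions, not the tool's implementation. The score is clipped to the range 0 (no shared features) to 1 (identical feature profile).

```python
import math
from typing import Sequence


def entanglement_proxy(features_a: Sequence[float], features_b: Sequence[float]) -> float:
    """Hypothetical proxy for entanglement between two classes.

    Assumption: each class is summarized by a feature-activation vector.
    Returns the cosine similarity of the two vectors, clipped to [0, 1]:
    0.0 means no shared features, 1.0 means an identical feature profile.
    """
    if len(features_a) != len(features_b):
        raise ValueError("feature vectors must have the same length")
    dot = sum(a * b for a, b in zip(features_a, features_b))
    norm_a = math.sqrt(sum(a * a for a in features_a))
    norm_b = math.sqrt(sum(b * b for b in features_b))
    if norm_a == 0.0 or norm_b == 0.0:
        # A class with no active features cannot share anything.
        return 0.0
    return max(0.0, min(1.0, dot / (norm_a * norm_b)))
```

With toy features ordered as `[fur, whiskers, feathers]`, a cat `[1, 1, 0]` and a dog `[1, 0, 0]` share only the fur feature and score about 0.71, while a bird `[0, 0, 1]` shares nothing with either and scores 0.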

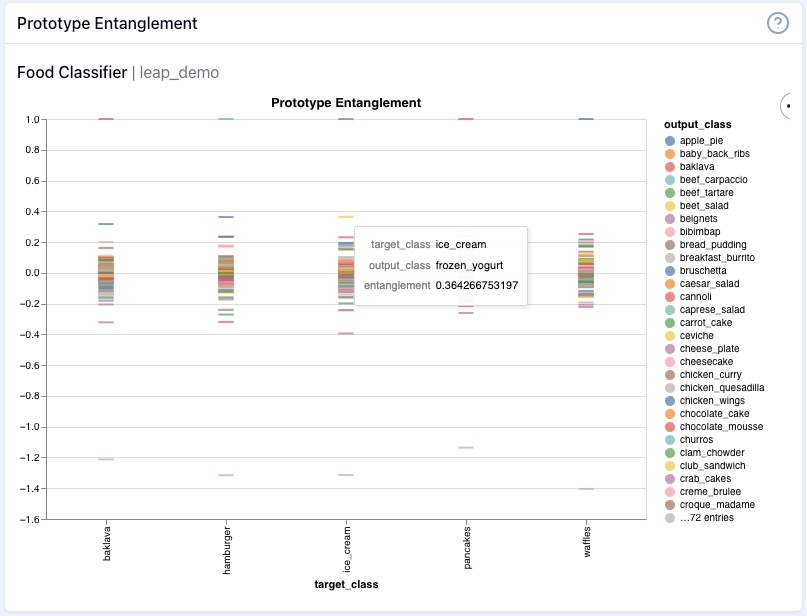

This is the entanglement chart of our food classifier for the selected classes baklava, hamburger, ice cream, pancakes, and waffles:

The vertical axis on the left shows the level of entanglement. A positive value indicates that there is overlap between the classes, and a score of 1 indicates complete entanglement with the given class. The horizontal axis along the bottom shows the selected target classes. When you hover over a class line in the chart, a pop-up box shows the target class, the output class it is entangled with, and the level of entanglement.

Frozen yogurt is the class most entangled with ice cream. That's not surprising. But with this new information, I want to test my model and see whether this entanglement could be a problem. I'll show you how in the next section on isolations.
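Once you have entanglement scores, for example noted down from the chart's hover box, choosing which classes to test next comes down to ranking. The sketch below assumes a hypothetical nested dictionary `scores[target][output_class]` holding values from 0 to 1. This layout and the helper name `entangled_classes` are illustrative assumptions, not an export format or API provided by the tool.

```python
from typing import Dict, List, Tuple

# Hypothetical layout: scores[target][output_class] = entanglement level (0 to 1),
# e.g. values copied by hand from the chart's hover box.
Scores = Dict[str, Dict[str, float]]


def entangled_classes(
    scores: Scores, target: str, threshold: float = 0.0
) -> List[Tuple[str, float]]:
    """Return (output_class, score) pairs entangled with `target`, most entangled first.

    Only scores strictly above `threshold` are kept; the default of 0.0 keeps
    every positive (overlapping) class. The target class itself is skipped.
    """
    row = scores.get(target, {})
    hits = [(cls, s) for cls, s in row.items() if cls != target and s > threshold]
    # Highest score first; ties broken alphabetically for a stable order.
    return sorted(hits, key=lambda pair: (-pair[1], pair[0]))
```

Keeping only scores strictly above the threshold matches reading any positive value as overlap. Raising the threshold narrows the list to the strongest pairs, such as ice cream and frozen yogurt, which are the natural candidates to examine further.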